Google Might Start Using Images and ML to Answer Queries Based on Entities Extrapolated from Images

Bill Slawski (https://www.seobythesea.com) dissects a recently approved Google patent that looks at using machine learning to enrich a knowledge base by analysing images.

This new patent focuses on assigning annotations to images to identify the entities contained in them, and on assigning those entities confidence scores. Google may also try to infer a relationship between the object entity and the attribute entity or entities, and include that relationship in a knowledge base.

An overly simplified version gives us the following key points (I strongly suggest reading Bill’s post):

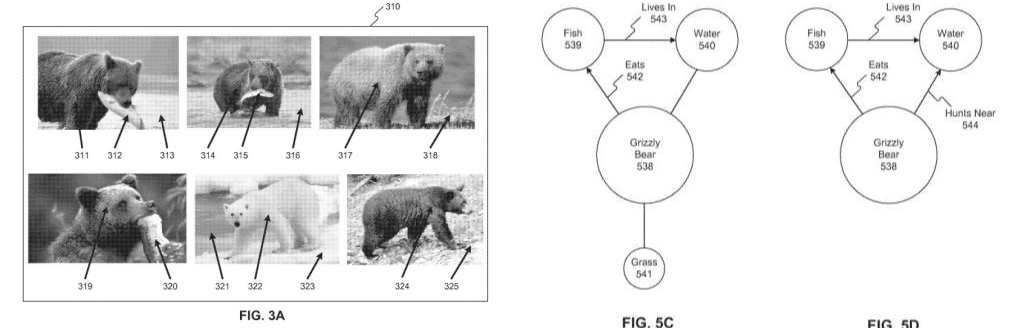

The illustrations from the patent show images of a bear eating a fish, to tell us that the bear is an object entity, that the fish is an attribute entity, and that bears eat fish. We are also shown that bears, as object entities, have other attribute entities associated with them, since they go into the water to hunt fish and roam around on the grass. From analyzing images of bears hunting for fish in water and roaming around on grassy fields, some relationships between bears and fish, water, and grass can be inferred.
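To make the bear example a little more concrete, here is a toy Python sketch of the general idea: keep only the annotations we are confident in, then count which entities keep showing up alongside the object entity. This is not the patent's method. The `Annotation` class, the `infer_relationships` function, the thresholds, and all the scores below are invented for illustration, and simple co-occurrence counting only tells us that two entities are related, not how (it would not learn "eats" on its own).

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Annotation:
    """One label attached to an image (hypothetical structure)."""
    entity: str
    confidence: float  # made-up score between 0 and 1


def infer_relationships(images, object_entity, min_confidence=0.7, min_support=2):
    """Count attribute entities that co-occur with object_entity.

    An image only counts if the object entity itself is a confident
    annotation. Both thresholds are arbitrary assumptions.
    """
    counts = Counter()
    for annotations in images:
        # Drop low-confidence annotations before looking for relationships.
        confident = {a.entity for a in annotations if a.confidence >= min_confidence}
        if object_entity not in confident:
            continue
        for entity in confident - {object_entity}:
            counts[entity] += 1
    # Keep only entities seen with the object entity often enough.
    return {entity: n for entity, n in counts.items() if n >= min_support}


# Invented annotations for five images.
images = [
    [Annotation("bear", 0.95), Annotation("fish", 0.88), Annotation("water", 0.91)],
    [Annotation("bear", 0.92), Annotation("fish", 0.81), Annotation("water", 0.75)],
    [Annotation("bear", 0.97), Annotation("grass", 0.90)],
    [Annotation("bear", 0.90), Annotation("grass", 0.85), Annotation("fish", 0.30)],
    [Annotation("bear", 0.40), Annotation("car", 0.95)],
]

related = infer_relationships(images, "bear")
print(related)
```

With this made-up data, fish, water, and grass each co-occur with a confidently identified bear in two images, so all three are kept as candidate attribute entities. The car is ignored because the bear annotation in that image falls below the confidence threshold.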

So, Google is trying to learn about real-world objects (entities) by analyzing pictures of those entities (ones that it has confidence in), as an alternative way of learning about the world and the things within it.