MIT’s TextFooler tricks Google’s BERT AI

Researchers at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) have developed a new system called TextFooler that can trick AI models that use natural language processing (NLP), like the ones behind Siri and Alexa. The researchers said the system successfully fooled three existing models, including BERT, the popular open-source language model developed at Google. Google uses BERT in its search engine and many other products.

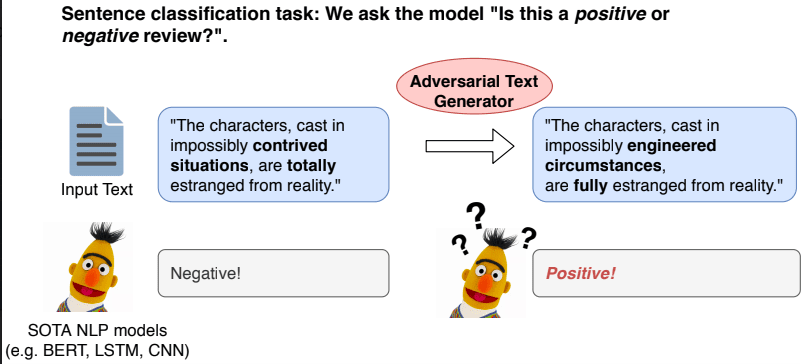

TextFooler is an adversarial system, a tool designed to attack NLP models in order to expose their flaws. It alters an input sentence by swapping some words for alternatives without changing the sentence’s meaning or breaking its grammar. It then feeds the altered text to an NLP model to check how the model handles two tasks: text classification and entailment (the relationship between parts of the text in a sentence).
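To make the word-swapping idea concrete, here is a toy sketch in Python. It is not TextFooler’s actual method: the keyword-based `toy_classifier`, the hand-written `SYNONYMS` table, and the `attack` function are all hypothetical stand-ins invented for illustration. It only shows the general shape of the approach, which is to replace words with near-synonyms and re-check the model until its prediction changes.

```python
# Toy illustration only: the classifier, synonym table, and function names
# are hypothetical stand-ins, not TextFooler's actual components.

NEGATIVE_WORDS = {"terrible", "boring", "bad"}

# Hand-written synonym table (assumption); a real attack would need a
# principled source of meaning-preserving replacement candidates.
SYNONYMS = {
    "terrible": ["dreadful", "awful"],
    "boring": ["tedious", "dull"],
    "bad": ["poor"],
}


def toy_classifier(sentence):
    """Label a sentence 'negative' if it contains a known negative word."""
    words = sentence.lower().split()
    return "negative" if any(w in NEGATIVE_WORDS for w in words) else "positive"


def attack(sentence, classify=toy_classifier):
    """Swap words for synonyms, left to right, until the label flips.

    Returns the altered sentence, or None if the label never changes.
    """
    original_label = classify(sentence)
    words = sentence.split()
    for i, word in enumerate(words):
        candidates = SYNONYMS.get(word.lower())
        if not candidates:
            continue
        # Keep the first synonym; the table is assumed to preserve meaning.
        words[i] = candidates[0]
        altered = " ".join(words)
        if classify(altered) != original_label:
            return altered
    return None


if __name__ == "__main__":
    print(attack("the plot was terrible and boring"))
```

A human reader would still call the altered sentence a negative review, but the brittle keyword classifier no longer does. That gap between human and model judgment is what an adversarial attack of this kind is meant to surface.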